PaaS to AKS: ARM for EDS

This blog post is part of a series about building a production-ready Continuous Integration setup for Sitecore using K8s containers on AKS. For more information and other articles on this subject, check out the series index.

External Data Services

A K8s deployment to AKS requires a number of so-called external data services (EDS) that do not run, or should not run, in a container:

- SQL Server or an Azure Elastic Database pool

- Solr Cloud 8.4.0

- Redis 5.0 or higher

To reduce the time required for development and testing, Sitecore provides these services as containers, but those are meant for non-production use only. You could create the services manually in the Azure portal, or run a VM and install all of them there, but neither option would meet our Infrastructure-as-Code requirement or satisfy our preference for PaaS.

We’d also like to add some extra services that could be seen as EDS. They support our container deployment and improve security and authentication in our production environment:

- Azure Container Registry (ACR) to host our images privately

- Azure Key Vault for secure key management

ARM Templates for EDS boilerplate

If those containers aren’t suitable for a production setup, you might wonder how to host those External Data Services outside the Kubernetes cluster.

I think it would be great if we had a default template or boilerplate that provides the required services to support your K8s deployment, much like the Sitecore Azure Quickstart Templates for a full PaaS Web Apps deployment. So that is exactly what we have created! (*)

We chose an elastic database pool running on SQL PaaS instances, a PaaS Redis service, as well as the native Azure ACR and AKV services. The Solr instance is the only exception to the PaaS principle: due to cost considerations we are running a Solr Cloud instance using ZooKeeper on a VM (but fully scripted of course). Additionally, we deploy a VNet to aid in setting up the traffic between our containers and our EDS. You can find the templates and accompanying scripts in my GitHub repository:

https://github.com/robhabraken/paas-to-aks/tree/main/azure/templates

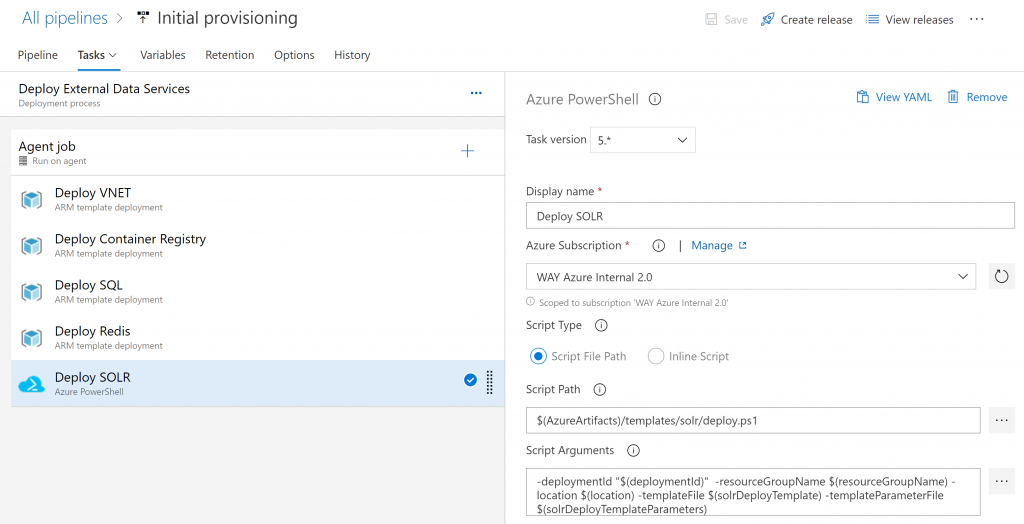

These templates can be deployed easily using a number of tasks in Azure DevOps:

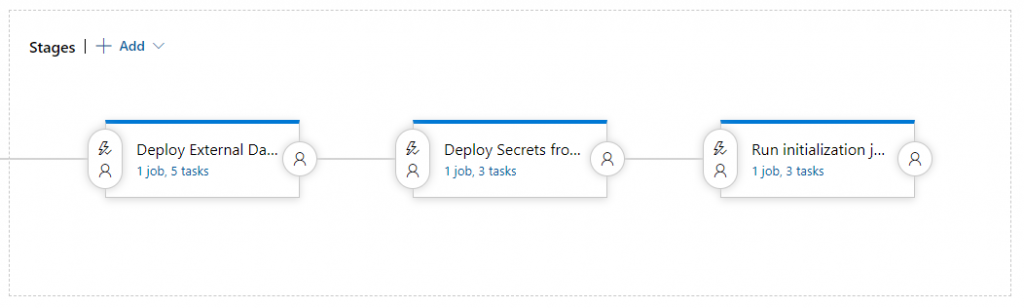

This is one of the three stages of our Initial Provisioning pipeline. It is followed by deploying the secrets, as described in my previous post, and by running the initialization jobs that Sitecore provides to initialize the EDS.

Once the setup is fully developed, I will also add a YAML-based pipeline for Azure DevOps to the aforementioned GitHub repository.

Fixing the hard-coded Redis connection

There is one issue, though, when you run the EDS outside of the AKS cluster. If you open the cd.yaml file in the Kubernetes specification files provided by Sitecore, you will see that the connection string to Redis is hard-coded:

```yaml
- name: Sitecore_ConnectionStrings_Redis.Sessions
  value: redis:6379,ssl=False,abortConnect=False
```

You can of course change the value right there, but I think it is more elegant to remove it from the specification files and place it in a secrets file, as is done with the Solr connection string, for example. Just add this snippet to the YAML file:

```yaml
- name: Sitecore_Redis_Connection_String
  valueFrom:
    secretKeyRef:
      name: sitecore-redis
      key: sitecore-redis-connection-string.txt
```

Change the hard-coded connection string value to:

```yaml
- name: Sitecore_ConnectionStrings_Redis.Sessions
  value: $(Sitecore_Redis_Connection_String)
```

Add a file named “sitecore-redis-connection-string.txt” into the ./secrets folder and add a reference to it in the kustomization.yaml file:

```yaml
- name: sitecore-redis
  files:
  - sitecore-redis-connection-string.txt
```

Subsequently, you can choose to retrieve this value either from an Azure DevOps Variable group, or by using a secret from your AKV instance.
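For a PaaS Redis instance, the secret file would hold a StackExchange.Redis-style connection string that points at the TLS port of the cache instead of the in-cluster `redis:6379`. The following is a minimal sketch in Python of how a pipeline step might produce that file. It assumes Azure Cache for Redis in the public Azure cloud; the function names and example values are hypothetical and not part of the boilerplate repository.

```python
from pathlib import Path


def redis_connection_string(cache_name: str, access_key: str) -> str:
    """Build a StackExchange.Redis connection string for Azure Cache for Redis.

    Azure Cache for Redis accepts TLS connections on port 6380, so ssl=True
    replaces the ssl=False used for the in-cluster Redis container.
    """
    host = f"{cache_name}.redis.cache.windows.net"
    return f"{host}:6380,password={access_key},ssl=True,abortConnect=False"


def write_secret(value: str, secrets_dir: str = "./secrets") -> Path:
    """Write the value to the file that kustomization.yaml references."""
    target = Path(secrets_dir) / "sitecore-redis-connection-string.txt"
    target.parent.mkdir(parents=True, exist_ok=True)
    # No trailing newline: the file content becomes the secret value verbatim.
    target.write_text(value, encoding="utf-8")
    return target
```

A pipeline step could then call `write_secret(redis_connection_string(name, key))`, with `name` and `key` read from the variable group or from AKV, before running the secrets deployment.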

Allow AKS to access the EDS

Inbound security rule for Solr NSG

To allow the K8s cluster to access our Solr Cloud instance, we need to add the IP addresses of the Frontend IP configuration of the “kubernetes” load balancer resource to the Inbound security rules of the NSG that is part of the VNet created by our ARM templates. You can find the load balancer in the additional resource group created by the AKS deployment, which contains the underlying infrastructure for our Kubernetes service. In our case it is named “MC_paas-to-aks_paas-to-aks-aks-cluster_westeurope”.
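As one possible way to script this step later on, the sketch below assembles the Azure CLI call that adds such an inbound rule. It is written in Python and only prints the command rather than running it. The resource group, NSG name, rule name, priority and IP address are placeholder assumptions, and port 8983 is simply Solr's default port, so adjust all of them to your own deployment.

```python
import shlex


def solr_nsg_rule_command(resource_group, nsg_name, source_ips,
                          solr_port=8983, priority=200):
    """Return the `az network nsg rule create` call as an argument list."""
    return [
        "az", "network", "nsg", "rule", "create",
        "--resource-group", resource_group,
        "--nsg-name", nsg_name,
        "--name", "AllowAksToSolr",       # hypothetical rule name
        "--priority", str(priority),      # any free priority in the NSG
        "--direction", "Inbound",
        "--access", "Allow",
        "--protocol", "Tcp",
        # One or more frontend IPs of the "kubernetes" load balancer.
        "--source-address-prefixes", *source_ips,
        "--destination-port-ranges", str(solr_port),
    ]


# Placeholder values; 203.0.113.10 is a documentation-only address.
cmd = solr_nsg_rule_command("paas-to-aks", "example-solr-nsg", ["203.0.113.10"])
print(shlex.join(cmd))
```

Printing the command first makes it easy to review the rule before pasting it into a shell or a pipeline task.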

Network configuration for SQL Server

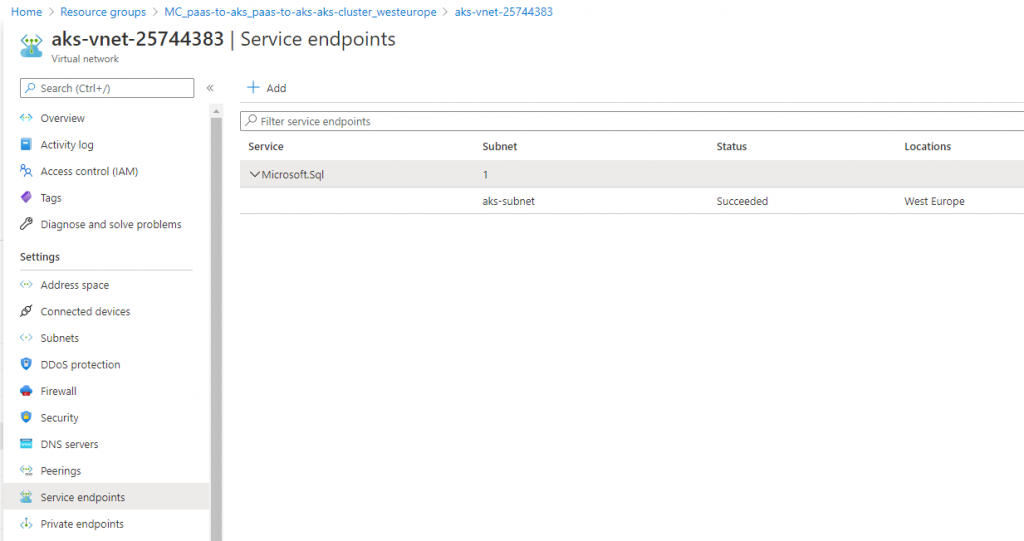

The VNet of this supporting resource group also contains a subnet called “aks-subnet” that encloses all of the resources of the K8s cluster. To allow communication between the cluster and SQL, you need to do some extra configuration. On the AKS VNet, add a Service endpoint for the Microsoft.Sql service, bound to the AKS subnet:

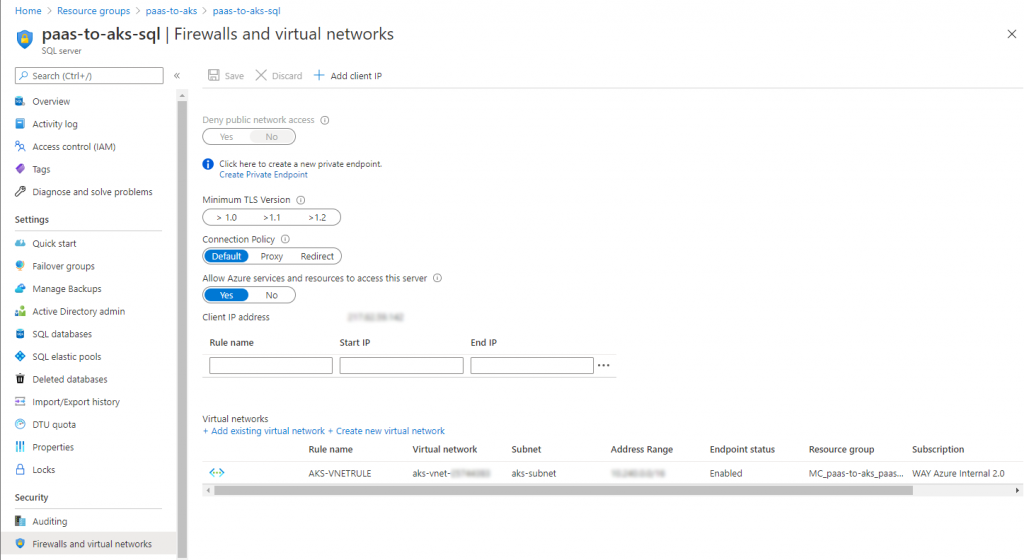

Then, within your SQL Server resource, go to Firewalls and virtual networks and add a VNet rule for the AKS VNet and its subnet. This enables the Service endpoint connection into your SQL Server instance.
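The two portal steps for SQL Server could also be expressed as Azure CLI calls. The sketch below, again in Python, builds both commands and prints them without executing anything. The VNet name, SQL Server name, rule name, SQL resource group and subscription ID are hypothetical placeholders; only the node resource group name and “aks-subnet” come from our own setup.

```python
import shlex


def sql_vnet_commands(node_rg, vnet_name, subnet_name,
                      sql_rg, sql_server, subscription_id):
    """Return the two Azure CLI calls that mirror the portal steps."""
    # The subnet lives in the AKS node resource group, so the SQL rule
    # refers to it by its full resource ID.
    subnet_id = (
        f"/subscriptions/{subscription_id}/resourceGroups/{node_rg}"
        f"/providers/Microsoft.Network/virtualNetworks/{vnet_name}"
        f"/subnets/{subnet_name}"
    )
    # Step 1: add the Microsoft.Sql service endpoint to the AKS subnet.
    enable_endpoint = [
        "az", "network", "vnet", "subnet", "update",
        "--resource-group", node_rg,
        "--vnet-name", vnet_name,
        "--name", subnet_name,
        "--service-endpoints", "Microsoft.Sql",
    ]
    # Step 2: add a VNet rule on the SQL Server for that subnet.
    add_vnet_rule = [
        "az", "sql", "server", "vnet-rule", "create",
        "--resource-group", sql_rg,
        "--server", sql_server,
        "--name", "allow-aks-subnet",     # hypothetical rule name
        "--subnet", subnet_id,
    ]
    return [enable_endpoint, add_vnet_rule]


commands = sql_vnet_commands(
    node_rg="MC_paas-to-aks_paas-to-aks-aks-cluster_westeurope",
    vnet_name="aks-vnet-example",         # placeholder VNet name
    subnet_name="aks-subnet",
    sql_rg="paas-to-aks",                 # placeholder resource group
    sql_server="example-sql-server",      # placeholder server name
    subscription_id="00000000-0000-0000-0000-000000000000",
)
for command in commands:
    print(shlex.join(command))
```

The order matters: the service endpoint has to exist on the subnet before the VNet rule on the SQL Server can make use of it.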

At the time of writing, we have configured the network manually, since we are still testing our setup. This will suffice for the time being, but once we finalize the setup, this configuration will of course be added to the templates and scripts of our EDS boilerplate.

(*) Please note that this template is still considered experimental. Although it works as is, it is subject to change while we’re testing it in various scenarios. It will probably be updated shortly, together with new blog posts in this series.
